Machine Vision for Flexible Robotic Automation

The synergies vision guided robotics (VGR) bring to the shop floor are numerous. Learn about the types of VGR systems and the trending uses for robotic automation.

Posted: December 18, 2021

ADVANCING AUTOMATION

By Sarah Mellish

To handle the growing complexity of customer requirements and the uptick in product differentiation that is often needed, company leaders continue to turn to flexible automation. As a result, easy-to-use and highly efficient robots are being added to existing lines, and entire robotic systems are being implemented to expertly manage the versatility, capacity and repeatability required to remain competitive.

Bolstering the functionality and subsequent popularity of these innovative solutions is the use of vision guided robotics (VGR). The use of VGR is highly advantageous, helping to ensure product quality, reduce machine downtime, tighten process control and increase overall productivity.

Vision guided robotics is a continuum, and it is important for manufacturers to have a clear understanding of the application process so they can determine which type of system to use.

2D VISION

The most common form of machine vision used in manufacturing, 2D vision works in a single plane (X, Y) and suits tasks where part depth data is not required. It uses grayscale or color imaging to create a two-dimensional map that shows anomalies or variations in part contrast. To work effectively, objects should be presented on a flat surface and be of a consistent shape and size.
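To make the idea of a two-dimensional contrast map concrete, the following is a minimal sketch of locating a part in a grayscale image. It is not taken from any vendor's software: the function name `locate_part`, the fixed threshold, and the assumption of a bright part on a dark, flat background are all illustrative. It thresholds the image, then uses image moments to estimate the part's center (X, Y) and in-plane rotation.

```python
import math

def locate_part(image, threshold=128):
    """Estimate (cx, cy, angle_deg) of the bright region in a grayscale image.

    image: list of rows, each a list of 0-255 intensity values.
    Pixels at or above `threshold` are treated as part, the rest as
    background (an assumption: bright part, dark flat surface).
    Returns None when no pixel passes the threshold.
    """
    pts = [(x, y)
           for y, row in enumerate(image)
           for x, value in enumerate(row)
           if value >= threshold]
    if not pts:
        return None  # nothing found in the field of view

    n = len(pts)
    cx = sum(x for x, _ in pts) / n
    cy = sum(y for _, y in pts) / n

    # Second-order central moments describe how the blob is spread out.
    mu20 = sum((x - cx) ** 2 for x, _ in pts) / n
    mu02 = sum((y - cy) ** 2 for _, y in pts) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in pts) / n

    # Orientation of the blob's major axis in image coordinates
    # (x to the right, y downward).
    angle = 0.5 * math.atan2(2 * mu11, mu20 - mu02)
    return cx, cy, math.degrees(angle)
```

A sketch like this only holds up under the conditions described above: a flat presentation surface and parts of consistent shape and size. Overlapping or stacked parts would merge into one blob and defeat it.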

Advancements in computer and camera technology continue to push 2D vision forward, increasing pixel counts and processing speeds for cameras and sensors alike. These gains have made 2D machine vision more reliable, improving its functionality, quality and usability for a range of tasks like metrology, barcode reading, label inspection, basic positional verification, and surface marking detection.

Manufacturers looking to use VGR, especially for pick and place, are encouraged to first consider this technology (i.e., area cameras and line scanners), as it is often more cost-effective than 3D vision. However, 2D vision systems can sometimes be challenging to program and set up, and there are times when they simply will not suffice.

3D VISION

Required for workpieces that vary in height, pitch or roll, 3D vision uses height measurement to learn more about an object so that a robot can quickly and easily recognize a part and control it as programmed. This form of VGR technology (i.e., structured light imaging, time-of-flight/indirect time-of-flight sensing, stereo cameras, and laser profilers) eliminates the need for pre-arranging parts prior to being located and moved by the robot, making it a viable asset for many applications.
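Two of the sensing methods named above recover height from simple geometric or timing relationships. The sketch below is illustrative only: the function names and example numbers are assumptions, and real sensors add calibration, filtering and noise handling on top. A time-of-flight sensor converts the round-trip travel time of light into distance, while a rectified stereo camera pair converts the pixel disparity between its two views into depth.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0

def tof_distance_m(round_trip_s):
    """Distance from a time-of-flight measurement.

    The light travels to the object and back, so the one-way
    distance is half of speed * time.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

def stereo_depth_m(focal_length_px, baseline_m, disparity_px):
    """Depth from a rectified stereo pair: Z = f * B / d.

    focal_length_px: camera focal length expressed in pixels
    baseline_m:      distance between the two camera centers
    disparity_px:    horizontal shift of the same point between views
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px

# Example values (hypothetical): a 10 ns round trip, and a stereo rig
# with a 1000 px focal length, 0.1 m baseline and 50 px disparity.
print(round(tof_distance_m(10e-9), 3))
print(stereo_depth_m(1000.0, 0.1, 50.0))
```

In both cases the result is a per-point height or depth value, which is what lets a robot handle parts that vary in height, pitch or roll.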

Diverse improvements in camera technology and processing software have helped to overcome obstacles that 2D technology could not solve. This is especially true for random bin picking, particularly for stacked objects. 3D vision systems can expertly sense randomly placed parts and determine how to handle them through the use of structured, integrated lighting and 3D CAD matching, equipping a robotic system with the complete ability to find, recognize and pick an object without the need for programming at the system level.

Before considering a vision solution, users must have a clear understanding of what the technology can do and what benefits may be gained, keeping in mind that the simpler solution is usually best. Concepts such as available lighting, environment texture and system calibration should be weighed before implementation.
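System calibration largely comes down to relating what the camera sees to where the robot moves. As a rough illustration for the planar 2D case, the sketch below fits an affine map from pixel coordinates to robot coordinates using three reference points whose positions are known in both frames. The function names and the sample coordinates are hypothetical, and production systems typically use more points and also correct for lens distortion.

```python
def fit_affine(pixel_pts, robot_pts):
    """Fit x = a*u + b*v + c and y = d*u + e*v + f from three point pairs.

    pixel_pts: three (u, v) pixel coordinates, not on one line
    robot_pts: the matching three (x, y) robot coordinates
    Returns ((a, b, c), (d, e, f)).
    """
    (u1, v1), (u2, v2), (u3, v3) = pixel_pts
    det = u1 * (v2 - v3) - v1 * (u2 - u3) + (u2 * v3 - u3 * v2)
    if abs(det) < 1e-12:
        raise ValueError("calibration points must not be collinear")

    def solve(r1, r2, r3):
        # Cramer's rule on the 3x3 system [u v 1] * [a b c]^T = r.
        a = (r1 * (v2 - v3) - v1 * (r2 - r3) + (r2 * v3 - r3 * v2)) / det
        b = (u1 * (r2 - r3) - r1 * (u2 - u3) + (u2 * r3 - u3 * r2)) / det
        c = (u1 * (v2 * r3 - v3 * r2) - v1 * (u2 * r3 - u3 * r2)
             + r1 * (u2 * v3 - u3 * v2)) / det
        return a, b, c

    xs = solve(*(p[0] for p in robot_pts))
    ys = solve(*(p[1] for p in robot_pts))
    return xs, ys

def pixel_to_robot(model, u, v):
    """Apply a fitted affine model to one pixel coordinate."""
    (a, b, c), (d, e, f) = model
    return a * u + b * v + c, d * u + e * v + f

# Hypothetical calibration: 0.5 mm per pixel, image y axis flipped
# relative to the robot y axis, origin offset by (200, 50) mm.
model = fit_affine([(0, 0), (100, 0), (0, 100)],
                   [(200.0, 50.0), (250.0, 50.0), (200.0, 0.0)])
print(pixel_to_robot(model, 40, 20))
```

If the camera or fixture is moved, a map like this no longer holds and the reference points must be measured again, which is one reason calibration effort belongs in the up-front evaluation.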

TRENDING ROBOTIC VISION USES

- Part Inspection: Providing capability and consistency that humans cannot, the use of robots and their peripherals for part testing and inspection can reduce scrap, maximize good parts, and optimize overall equipment effectiveness. Whether it is carried out to ensure the surface quality, weld integrity or part geometry of an in-house workpiece, or to check the caliber of parts brought in from another tier of the supply chain, inspection can be highly beneficial.

Coupled with the right robot and software, testing and inspection can be achieved with the use of sensors, eddy current testers, laser scanners, thermal imaging devices, infrared scanners, and 2D and 3D vision systems. The latter vision functionality provides powerful tools and macros for quick and easy recognition of parts, facilitating greater product throughput.

- Seam Finding: To optimize weld quality and increase product throughput, manufacturers continue to implement high-speed seam finding technologies. From through-wire touch sensing and laser scanning, to laser line scanning and 2D vision, multiple solutions exist. The 2D vision option is being used to address demanding cycle times for structured and semi-randomly placed parts. Combined with leading pattern recognition software, comprehensive solutions like Yaskawa’s MotoSight™ 2D are allowing robot users to capture the location and orientation of a part in seconds without adding extra test points.

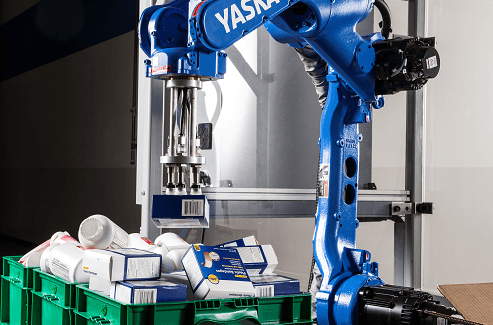

- Parcel Sortation: Research and development for artificial intelligence (AI) has helped to unlock massive potential for retailers. Versatile robots equipped with intelligent 3D vision and interactive motion control can now determine the shape of each object and understand how to retrieve it. This enables adaptive solutions that can quickly and reliably pick a vast array of objects with little to no human intervention. Because they help address the physical modifications required to meet supply chain variability and on-time delivery, robotic systems that efficiently manage environmental changes in real time will continue to permeate order fulfillment facilities.

- Random Depalletization: Deep-learning 3D vision for industrial robots is being used for the random depalletization of multi-SKU (stock keeping unit), single-SKU and random pallets. Using a browser-based graphical user interface (GUI) and a high-resolution 3D sensor (or 2D sensor in some cases) with advanced motion planning, a highly functional robotic system can be configured in a matter of hours.

- Material Handling: Industrial and collaborative robots equipped with vision systems, LIDAR sensors, custom end-of-arm tooling (EOAT) and more are being mounted on extremely flexible autonomous mobile robots (AMRs) to meet the uptick in process demand. Completing tasks with a high degree of skill, these mobile robotic platforms maneuver independently through a facility to their assigned tasks, performing jobs such as picking, sorting, machine tending and on-demand material transport. Overall, the use of AMRs facilitates multi-shift operation, minimizes manual product transfer damage, and optimizes product workflow.

From “scan and plan” offline path programming to application breakthroughs for welding, handling and more, vision guided robotics holds great potential.